So Nerdy Planet #8

Hello, Dear Nerds,

This week, everything is about Apple's presentation and how the new iPhones are "not innovative" but, for some reason, still "cool". I won't dwell on this: if you are interested, you have probably read or watched some reports on it already!

That means that today we will speak about:

- New report from LeadDev

- One more nation in the AI race - United Arab Emirates

- Sam Altman, Tucker Carlson, and dead whistleblower Suchir Balaji

New report from LeadDev

Well, here we are again. Another shiny report trying to tell us what AI really did to engineering. This time, LeadDev surveyed 883 engineering leaders worldwide, and the results are… both exciting and depressing at the same time.

What is LeadDev? LeadDev is a global community and resource hub for engineering leaders, providing content, conferences, and virtual programs to help them learn about team management, technical strategy, process improvements, and personal development. Basically something I am trying to create with sonerdy.me, but better :) (hopefully)

The Good News Everyone (c) Futurama

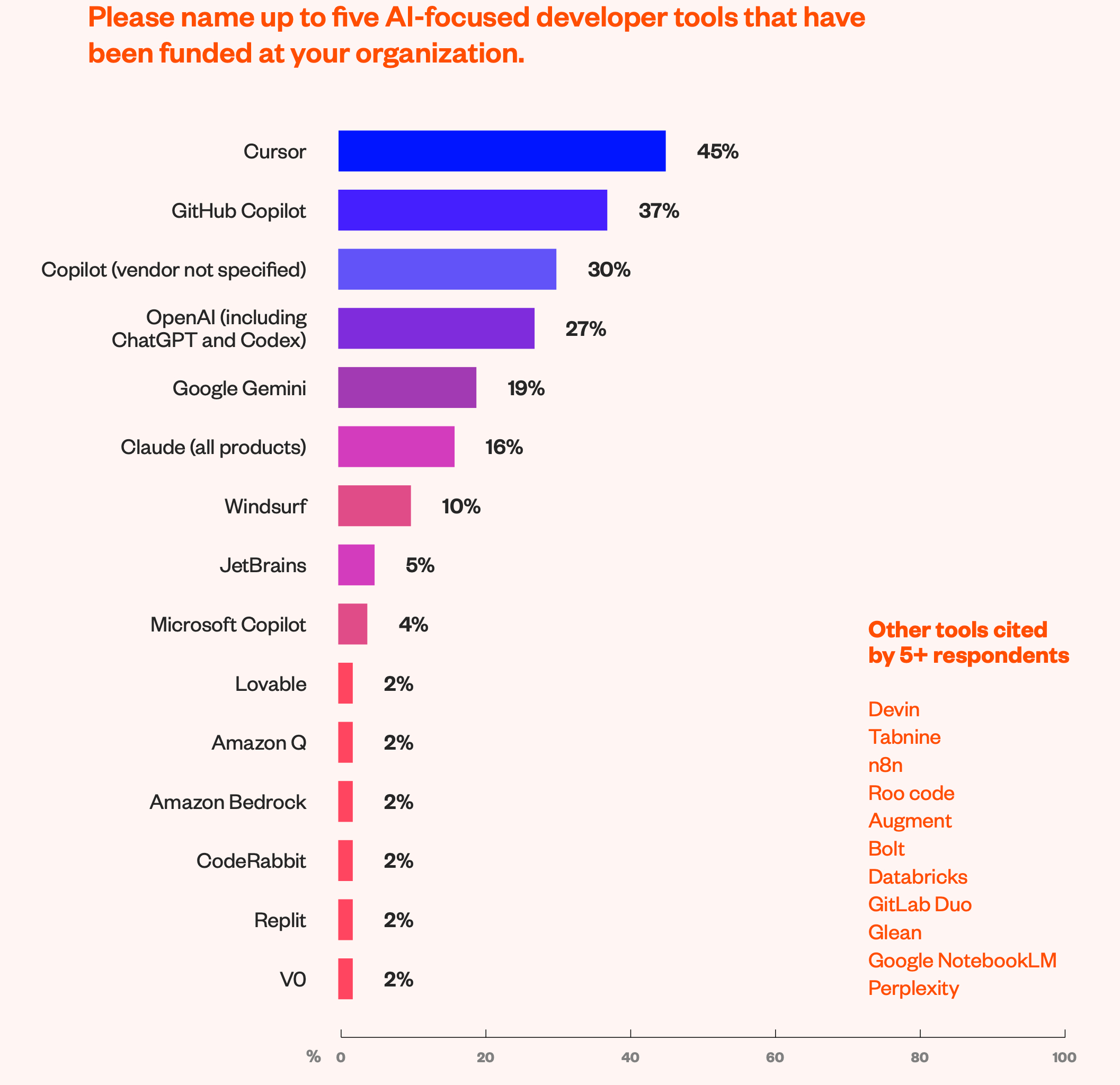

Let’s start with the positive. 66% of organizations have already adopted AI tools in production. Cursor is the new rockstar editor (45% adoption), followed by GitHub Copilot (37%) and the usual suspects: ChatGPT, Gemini, Claude.

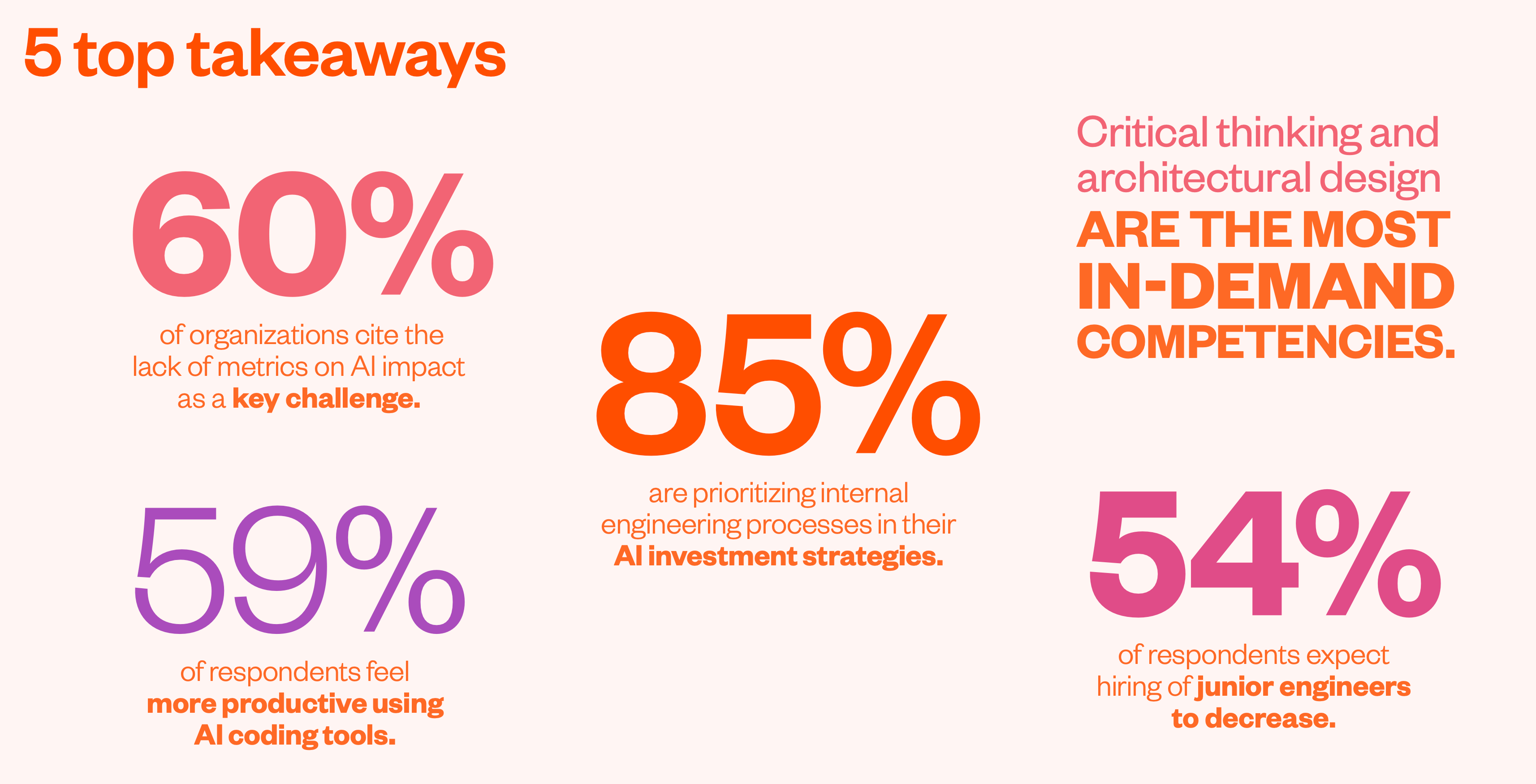

And people do feel better: 59% of respondents say they’re more productive, with small teams reporting up to 25% boosts. Even better, tech debt doesn’t seem to explode as everyone feared — actually, 23% say it went down (probably those who finally forced Copilot to write tests for them).

Budgets? Big organizations spend $100k+ on AI models like pocket change. Medium-sized ones stay modest, investing $5k to $25k a year. Basically, the bigger the company, the bigger the playground.

Funnily enough, this contradicts the MIT report we discussed two weeks ago. But, well, that report was mostly about sales organizations, not tech ones. Tech is winning here.

The Dark Side: Juniors, Beware

But here comes the fun part. 54% of leaders believe AI means fewer junior engineers will be hired long term. Why? Because if AI can handle boilerplate and bug fixes, juniors lose the one entry path they had. On top of that, mentoring drops: 38% of leaders admit they spend less time teaching juniors because “AI can help them.”

Translation: Juniors are being trained by autocomplete. Good luck building engineering fundamentals when your teacher is a chatbot that sometimes hallucinates semicolons.

Once again, there is nothing new here. I expect more boot camps for juniors to close soon. Landing a first job will get much harder as AI coding assistants become more capable.

Skills That Actually Matter

Forget “learning to code faster.” Leaders now value critical thinking (43%) and architectural design (34%) more than ever. In plain words: AI can write functions, but it still can’t decide what the system should look like. The rise of “agent managers” and prompt engineers (a.k.a. “AI whisperers”) proves this point.

So, while juniors fight with Copilot, seniors are expected to step up as system thinkers and babysitters for AI agents. I would call them AI-agent managers. Now you will understand the pain of your engineering manager when they try to explain what they want from you :)

My Take

This report confirms what many of us feel daily: AI is great at productivity theater. You see more commits, faster outputs, and fewer boring tasks. But the structural issues — hiring, mentoring, and long-term engineering quality — are getting trickier.

The irony? Leaders complain they don’t have proper metrics (60% say measuring AI impact is a nightmare), but they’re still cutting juniors first. Classic.

The majority of respondents (60%) named a lack of clear metrics for AI’s impact on productivity or quality as a key challenge. When explicitly asked if their companies were currently measuring the impact of AI tools, only 18% attested that they were, with 40% still assessing the best route – suggesting that the issue may lie in nailing down the right metrics to track.

So yes, AI is here, it’s useful, and it’s changing workflows. Just remember: AI won’t replace you, but it might kill the pipeline of people who could’ve replaced you.

United Arab Emirates joins the AI race

So, we have a new player in the AI market: K2 Think, a model from the UAE's Mohamed bin Zayed University of Artificial Intelligence (MBZUAI), built in collaboration with the Emirati tech conglomerate G42.

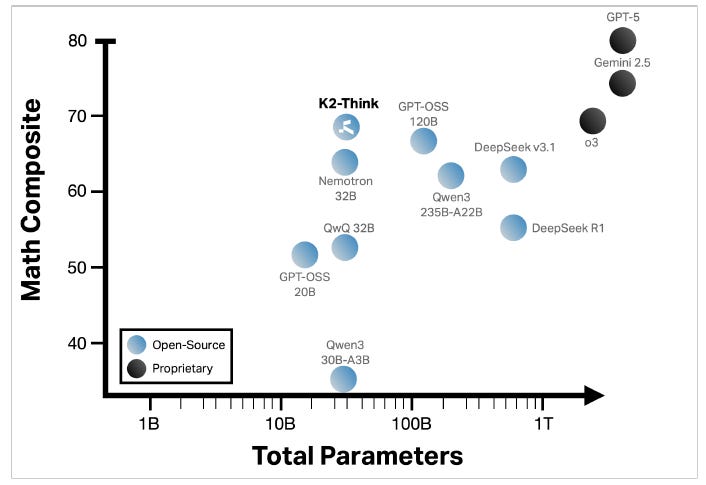

K2 Think is relatively small, with only 32 billion parameters. However, the reported results are not bad at all and, in some places, are better than those of models from OpenAI and DeepSeek.

What is also interesting: it runs on Cerebras chips (not NVIDIA). K2 Think is not the first model running on these chips; Mistral was there first :) It looks like some companies will start challenging NVIDIA's dominance, though for now only in inference (NVIDIA is still the king in training).

From the K2 Think page, in the authors' own words:

K2 Think is the world’s fastest open-source AI model and the most advanced open-source AI reasoning system ever created. At just 32B parameters, it delivers frontier-level performance with small model efficiency – fast, lean, and powerful enough to solve complex problems step by step. Co-developed by MBZUAI and G42 and powered by Cerebras, K2 Think brings world-class AI reasoning to all, combining speed, clarity, and openness to transform how we understand, plan, and act.

We will do a deep dive into how K2 Think was trained in one of our next issues, but here is what we can say now:

- Efficiency over brute force: A smaller model, but smart architecture + clever training = something that competes without needing the multi-hundred-billion-parameter wallet. This is exactly the kind of move the rest of us have been waiting for: AI that doesn’t cost a fortune to run but still does the heavy lifting.

- Sovereign AI flex: The UAE isn’t just buying in, it’s building. This adds to their growing portfolio (Falcon Arabic, etc.). They’re aiming for global recognition as a player that shapes how AI governance, openness, and infrastructure work, not just as a market. So, among open-source / open-weight models, we now have THREE major players: the USA with OpenAI and Llama, China with DeepSeek and Qwen (K2 Think is based on it), and the UAE with K2 Think.

- Open-source strategy as soft power: By releasing K2 Think openly (with transparent training & data), the UAE gains credibility and attracts researchers, developers, and partnerships. It’s not just about beating others; it’s about influencing the norms of the AI arms race. As we discussed in "So Nerdy Planet #3," open-weight models have many geopolitical reasons to exist.

But for now, let's wait a bit more for independent test results. K2 Think clearly isn't meant to be a multi-purpose model; rather, it can be a good reasoning model for solving mathematical problems. The only question is how good it can be.

Sam Altman, Tucker Carlson, and dead whistleblower Suchir Balaji

Tucker Carlson, no matter what you feel about him, has the luxury of interviewing the most influential or famous people in the world. He interviewed Pavel Durov, listened to a mad history lecture from Putin, and has now spoken with Sam Altman. I can't say that his discussion of AI differed from the tons of other interviews Sam Altman has given, so nothing particularly interesting there.

What was really interesting was the part of the conversation about Suchir Balaji.

Who is Suchir Balaji?

He was a researcher at OpenAI for about four years. He left OpenAI in August 2024 after raising concerns about copyright violations: his argument was that OpenAI’s models may have been improperly trained on copyrighted material, which is basically stealing.

On November 26, 2024, he was found dead in his San Francisco apartment. Authorities ruled it a suicide by a self-inflicted gunshot wound. The police and the medical examiner stated there was “no evidence of foul play.”

However, everything about this story was very unusual:

- Reports mention blood in multiple rooms, cut surveillance wires, and even signs of a possible struggle.

- Balaji had just come back from vacation, ordered food, and was making normal life plans: not the usual signs of someone preparing to end things.

- No suicide note was found, which always leaves more room for speculation.

What about Altman?

In the interview, Carlson tried to get at least something out of Altman by asking about the unusual scene. Altman stood his ground, in his hesitant manner: he said he believed it was suicide, mentioned that Balaji was his "almost friend", and said he was not sure it was OK to talk about such a sensitive topic. Carlson, who had already spoken with Balaji’s family, pressed Altman with their questions directly during the interview.

In the end, Altman, pushed into defending OpenAI and himself, was visibly uncomfortable. He said it sounded like an accusation, but he stuck by the official ruling.

What I expected to hear was more willingness on Sam Altman's part to get to the bottom of this incident.

Why?

Even though we've already witnessed "Facebook files," "Uber files," and so on, tech companies are still considered "Good" rather than "Big Corporations that can do anything for more money." Even OpenAI, which is now considered one of the most important companies for Humanity, is considered good.

That raises many questions:

- Whistleblower safety and trust: If people raising allegations inside big AI companies feel at risk or don’t believe they’ll be protected, that chills the kind of transparency we (Humanity) need.

- Corporate accountability: When someone accuses a company of wrongdoing and dies under contentious circumstances, there’s a heavy burden on that company (and public institutions) to ensure the investigation is fully credible.

- Public perception of AI: Already, many are skeptical of tech’s ethics, data usage, copyright issues, etc. A story like this amplifies distrust, especially when alt-right/conservative media gets involved (Carlson etc.) or when high-profile figures like Musk stoke the fire.

- Legal & regulatory consequences: Balaji was named in (or was potentially a witness in) copyright lawsuits against OpenAI. If there are leaks, data, or documents yet unexamined, this could reopen legal challenges or add pressure for stricter oversight.

On a personal note: Altman's answers increasingly look like ChatGPT answers. They are polite and respectful, but they don't reveal what he really thinks. That can be terrifying.

Book Recommendation

Once a week, I will recommend a book that I find important for every manager to read.

Managing the Unmanageable: Rules, Tools, and Insights for Managing Software People and Teams (Amazon)

The book, which is popular among EMs, contains tons of scripts and templates for day-to-day life.

Community News

We had our fourth Bi-Weekly, during which we covered a wide range of topics, including compensation discussion, budgeting, and building the team's vision.

Also, the Community now has access to templates and examples on how to build a good Mission and Vision document.

Please check sonerdy.me to find out how to join our Community, where you can access all recordings of our bi-weekly sessions, a list of templates, and courses!
